Introduction

After a long period of silence, I am now going to write a post on hacking Solidity smart contracts for dummies (like me). The easiest way to pen-test Solidity smart contracts is through REMIX!!!!!

This post provides an introduction to the world of smart contract security for people with a background in traditional cyber security and little knowledge of crypto and blockchain tech. While other smart contract platforms exist, we will be focusing on Ethereum, which is currently the most widely adopted platform.

The Landscape In Smart Contracts

When you start hacking smart contracts, you will instantly realize that there is NO DOCUMENTATION, NOOOOOO TUTORIALS, NO NOTHING. Do not get depressed, Elusive Thoughts is here to help you!!!

Web Apps Versus DApps

On my quest into Solidity hacking, I found this awesome info on arvanaghi (see references below), so enjoy. When your browser interacts with a regular web application, the web app might speak to other internal servers, databases, or a cloud.

In the end, the interaction is simple:

In a DApp, most interactions are the same. But there’s a third element: the smart contract, which is publicly accessible. Some interactions with the web application will lead to either a read or a write to one or multiple smart contracts on the Ethereum blockchain.

Because smart contracts are publicly accessible, we can interact with them directly, unimpeded by the web server logic that might limit what transactions we can issue.

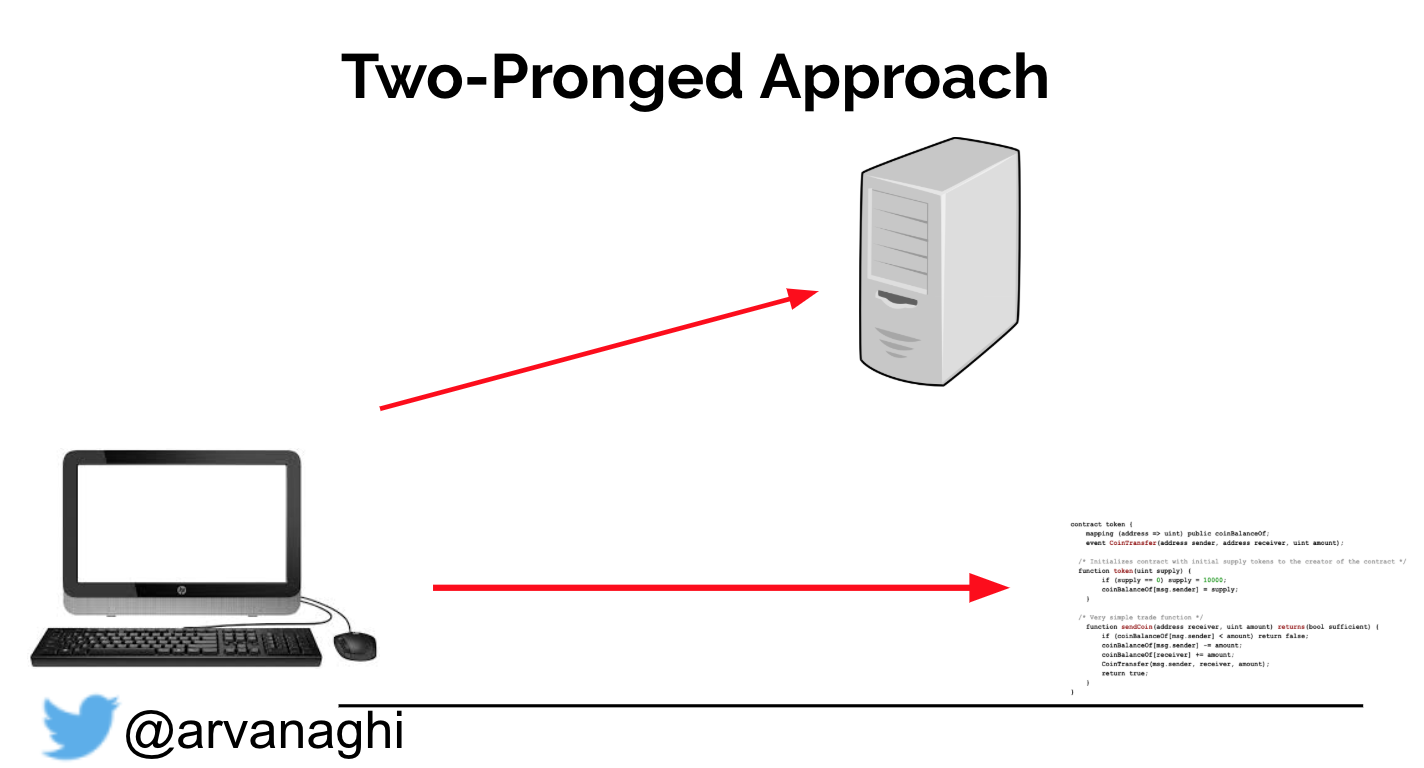

So far, we have a two-pronged approach to our hacking quest:

- A standard web application, which we can hack by exploiting authentication, access controls, session management, etc.

- A DApp, which has both a web component and a smart contract to audit.

Note: In other words, we check for logical and input validation errors in both the web application and the smart contract logic.

Below we can visualize the flows described:

Note: In order to interact with the chain directly, you can use a wallet such as MetaMask.

Hacking DApps

When you create a smart contract, a transaction is sent to the blockchain to deploy it. So that no one else but the creator of the smart contract can change anything (become a super user!!), we essentially create a new variable of type address called “owner”. In Solidity we use modifiers to build on this concept by altering the function execution flow.

In Solidity, modifiers express what actions are occurring in a declarative and readable manner. They are similar to the decorator pattern used in Object Oriented Programming. Functions are tagged with the name of a modifier to control access or, put in other words, modifiers are used to modify the behavior of a function. For example, you can add a prerequisite that must hold before a function is allowed to execute.

Below we can see a simple modifier example:

modifier onlyOwner {
    // The message sender has to be the owner (msg.sender is a global variable)
    require(msg.sender == owner);
    // Placeholder where the body of the modified function is executed
    _;
}

Note: Here we define a modifier.

Below we can see how the modifier is used (through function tagging):

function writeData(bytes32 data) public onlyOwner returns (bool success) {
    // will only run if owner sent transaction
}
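
To see how the two snippets above fit together, here is a minimal sketch of a complete contract. The contract name OwnedStorage, the stored variable and the revert message are assumptions made up for illustration; they do not come from any real project.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.0;

// Hypothetical example: the name OwnedStorage and the field "stored" are assumptions.
contract OwnedStorage {
    address public owner;  // the "super user" of the contract
    bytes32 public stored; // data that only the owner may change

    constructor() {
        // Whoever sends the deployment transaction becomes the owner.
        owner = msg.sender;
    }

    modifier onlyOwner() {
        // Revert unless the caller is the owner.
        require(msg.sender == owner, "caller is not the owner");
        _; // the body of the tagged function runs here
    }

    function writeData(bytes32 data) public onlyOwner returns (bool success) {
        stored = data;
        return true;
    }
}
```

If you deploy this in Remix and call writeData from a second account, the call should revert, while the deploying account can write freely.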

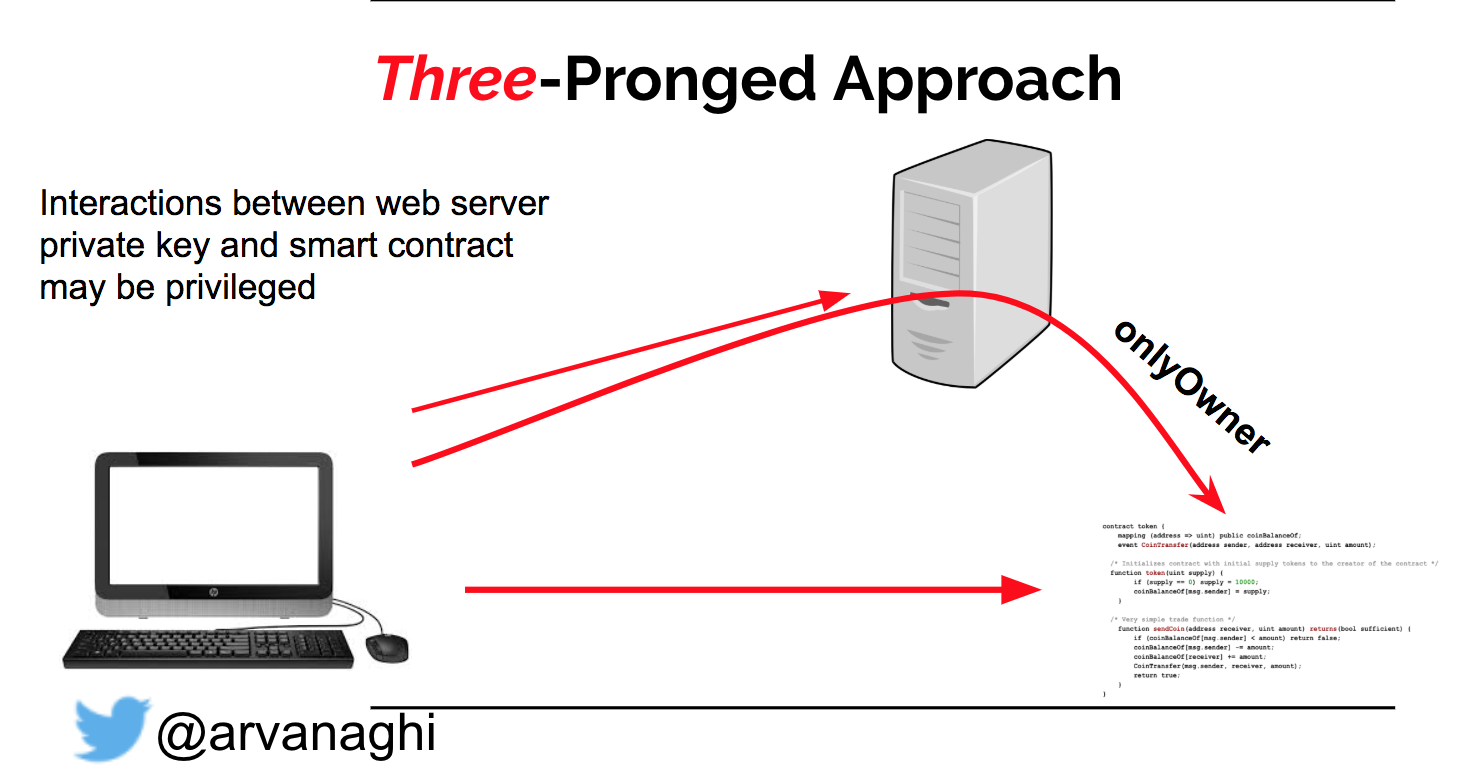

Because of modifiers, though, there’s actually a third prong to our attack that we have to consider.

Below we can see the flow of what we just described:

Because DApps deal with Ethereum accounts, they are based on public key authentication. Most of the time, no password authentication is used (serious DApps use JWT tokens in conjunction with the public key authentication). Though we can interact directly with the smart contract, we can’t execute functions when modifiers like onlyOwner are properly implemented.

This means that the server is acting as a middle man between you and the blockchain or, put simply, the "wallet of the DApp server" holds the address of the owner (not always) and the private keys, and you don't. A DApp with a proper security architecture does not follow this design pattern; if proper RBAC is implemented, we are good to go. Still, the majority of DApps today use this bad design pattern.
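
As a hedged illustration of what "not properly implemented" could look like, the sketch below protects writeData but forgets to protect the function that changes the owner. The contract BrokenOwnership and its setOwner function are hypothetical and deliberately buggy; they are not taken from a real DApp.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.0;

// Hypothetical, deliberately vulnerable contract used only for illustration.
contract BrokenOwnership {
    address public owner;
    bytes32 public stored;

    constructor() {
        owner = msg.sender;
    }

    modifier onlyOwner() {
        require(msg.sender == owner, "caller is not the owner");
        _;
    }

    // BUG (on purpose): the onlyOwner tag is missing,
    // so any account can make itself the owner.
    function setOwner(address newOwner) public {
        owner = newOwner;
    }

    // Correctly protected, but useless once the owner can be replaced.
    function writeData(bytes32 data) public onlyOwner returns (bool success) {
        stored = data;
        return true;
    }
}
```

An attacker talking to the contract directly would first call setOwner with their own address and then call writeData, without ever touching the web server.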

When dealing with a DApp, the private keys to these privileged addresses almost certainly exist on the web server. And the web application almost certainly has logic that takes user input over the web and calls a privileged function in the smart contract using one of those keys.

All this considered, we have our attack surface:

- A standard web application assessment: test for the typical OWASP Top 10 vulnerabilities and you are covered.

- A smart contract audit of the source code.

- Attempting to forge privileged writes to the smart contract through the web interface. Can you get the web application to interact with the smart contract in a way it didn’t expect?

So to test the DApp, we would have to use a web proxy such as Burp and also review and test the Solidity code (perform both dynamic and static code analysis). Because I have dedicated my life to writing posts on web app testing, I am going to skip the web testing. But remember to always test the key privilege checks when testing DApps.

Note: Also, if you try to hack legitimate DApps without permission, you should know that it is illegal and escaping with the funds is not easy; you are going to get caught in a tornado mesh.

Solidity Tools For Hacking

Top tools for automating part of the smart contract pen-test are:

- REMIX - Remix IDE is used for the entire journey of smart contract development by users at every knowledge level. It requires no setup, fosters a fast development cycle and has a rich set of plugins with intuitive GUIs. The IDE comes in 2 flavors (web app or desktop app) and as a VSCode extension.

- VSCode - Visual Studio Code is a lightweight but powerful source code editor which runs on your desktop and is available for Windows, macOS and Linux. It comes with built-in support for JavaScript, TypeScript and Node.js and has a rich ecosystem of extensions for other languages and runtimes (such as C++, C#, Java, Python, PHP, Go, .NET).

- Slither - Slither is a Solidity static analysis framework written in Python 3. It runs a suite of vulnerability detectors, prints visual information about contract details, and provides an API to easily write custom analyses. Slither enables developers to find vulnerabilities, enhance their code comprehension, and quickly prototype custom analyses.

- Mythril - Mythril is a security analysis tool for EVM bytecode. It detects security vulnerabilities in smart contracts built for Ethereum, Hedera, Quorum, Vechain, Rootstock, Tron and other EVM-compatible blockchains. It uses symbolic execution, SMT solving and taint analysis to detect a variety of security vulnerabilities. It's also used (in combination with other tools and techniques) in the MythX security analysis platform.

Setting Up The Hacking Environment

The biggest pain in Solidity smart contract testing is configuring and adding the libraries so that the project runs smoothly. Not anymore; here is an easy way to do it.

Step One: Load the project from Github to Remix :- In the top left corner click Clone Git Repository.

Note: Most of the time the client will give you a public git repo URL to load the code (or a private git repo URL). Now with Remix, you can load it directly.

Note: Before you upload confidential code to Remix, make sure you have permission; the code may be proprietary or patented.

Step Two: Compile your code and troubleshoot :-

Note: It is worth mentioning that the Remix compiler will also generate the relevant project files, which you can download and use on your workstation with some modifications.

Step Three: Fixing import errors:-

In order to resolve the issue, we refer to the online REMIX guide found here, which says more or less to replace the original path:

import "../../utils/introspection/IERC165.sol";

with

import "https://github.com/OpenZeppelin/openzeppelin-contracts/blob/master/contracts/utils/introspection/IERC165.sol";

And then taddaaaaaa, magic happens, the code compiles!!!
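
For context, here is a minimal sketch of a file that uses the rewritten import. The contract name InterfaceProbe is an assumption for illustration, and the pragma is a guess at a compiler range that the master branch of OpenZeppelin accepts; adjust it to whatever the imported file declares.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.20;

// The relative path "../../utils/introspection/IERC165.sol" is replaced by the full GitHub URL.
import "https://github.com/OpenZeppelin/openzeppelin-contracts/blob/master/contracts/utils/introspection/IERC165.sol";

// Hypothetical contract that only exists to prove the import resolves.
contract InterfaceProbe is IERC165 {
    function supportsInterface(bytes4 interfaceId) external pure override returns (bool) {
        // Report support for ERC-165 itself and nothing else.
        return interfaceId == type(IERC165).interfaceId;
    }
}
```

Note that importing from the master branch means the code can change under you; for a real audit you would probably want to pin the version the client actually uses.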

Remix Debugging and Security Plugins

Besides an online compiler, Remix also has awesome!!!! plugins, including one for static analysis. Static code analysis is a process that debugs code by examining it without actually executing it. The Solidity Static Analysis plugin performs static analysis on Solidity smart contracts once they are compiled. It checks for security vulnerabilities and bad development practices, among other issues. It can be activated from the Remix Plugin Manager.

Another plugin you will also want to install is MythX by ConsenSys. MythX is a security analysis service that performs static and dynamic security analysis using the MythX cloud service. MythX offers a suite of analysis techniques that automatically detect security vulnerabilities in Ethereum smart contracts.

Note: MythX requires an API key to work with Remix.

Step Four: Installing the static analysis plugin. In order to install the plugin, go to the bottom left corner.

Step Five: Use the plugin manager search to find the plugins you would like to use for your hacks (type static analysis).

Note: There are numerous plugins you can choose from to install. Search the ones you like.

Below we can see the plugins to install (static analysis):

Below we can see the plugins to install (MYTHX):

Note: The plugin depends on the Solidity Compiler plugin, so you need to activate that as well.

The static analysis plugin checks for the following security issues:

The static analysis plugin also looks for non-security issues. Some of the issues shown below can be used to perform Gas Denial of Service attacks so pay attention!!!!!:
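
To make the Gas Denial of Service idea concrete, here is a minimal sketch of the kind of pattern that gas-related checks typically target: a loop over an array that anyone can grow. The contract Payouts and its functions are hypothetical, and the exact wording of the plugin's warning is not reproduced here.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.0;

// Hypothetical contract used only to illustrate a gas DoS pattern.
contract Payouts {
    address[] public recipients;
    mapping(address => uint256) public credits;

    // Anyone can append to the array, as many times as they like.
    function join() external {
        recipients.push(msg.sender);
    }

    // The gas cost grows with recipients.length. With enough entries,
    // the call no longer fits in a block and always fails.
    function creditAll(uint256 amount) external {
        for (uint256 i = 0; i < recipients.length; i++) {
            credits[recipients[i]] += amount;
        }
    }
}
```

An attacker only has to call join often enough to make creditAll permanently unusable for everyone else.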

The report generated by the static analysis plugin will look like this:

Note: Some of the findings can be false positives, so take care. Make sure you untick the external library option if the libraries are trusted.

Interacting From Your Machine With Remix

The next step is to see how we can work with Remix locally, from our machine. For a complex project, you can't just copy and paste a single sol file and let it run. To make our life easier, Remix has a localhost connection, which lets the IDE work with the project folder on your local machine. This is something I'm used to doing when the project has a large number of inherited contracts. Obviously, this makes our life easier than ever: just download the git project and run a few commands.

remixd is a tool intended to be used with Remix IDE (aka Browser-Solidity). It provides a websocket connection between Remix IDE (the DApp) and the local computer. You can also use Burp to intercept the remixd traffic (although it is not going to be easy). In practice, Remix IDE makes available a folder shared by remixd. If you would like to install the tool, follow the steps below (remixd needs npm and node).

yarn global add @remix-project/remixd

Note: Alternatively, remixd can be used to set up a development environment that works with other popular frameworks like Embark, Truffle, Ganache, etc. The command above will work on most Linux distributions.

Step Six: Go to WorkSpaces and click "Connect to Localhost"

Step Seven: Check the remixd version and connect.

Note: A message box pops up; read it carefully and copy the command shown in the box to connect your localhost. In the remixd command, the -s parameter is the local folder you want to share and the -u parameter is the URL of the Remix IDE instance you are using.

The remixd command for my workspace is:

remixd -s localWorkspace/ -u https://remix.ethereum.org

Note: remixd provides full read and write access to the given folder for any application that can access TCP port 65520 on your local host. To minimize the risk, remixd can ONLY bridge between your filesystem and the Remix IDE URLs, including:

- https://remix.ethereum.org

- https://remix-alpha.ethereum.org

- https://remix-beta.ethereum.org

- package://a7df6d3c223593f3550b35e90d7b0b1f.mod

- package://6fd22d6fe5549ad4c4d8fd3ca0b7816b.mod

- https://ipfsgw.komputing.org

Note: In the terminal where remixd is running, typing ctrl-c will close the session. Remix IDE will then put up a modal saying that remixd has stopped running. If you want to kill remixd, just run killall node from the terminal where you launched the tool.

Remixd and Slither (automated security scans)

The end goal of this lengthy post is to enable you to run automated scans from Remix with tools such as Slither. When the remixd NPM module is installed, it also installs Slither, solc-select and the latest version of solc.

If you run the following command in your Linux box, it will work 90% of the time:

remixd -i slither

Note: The command above will take care of all the dependencies.

If a project is shared through remixd and localhost workspace is loaded in Remix IDE, there will be an extra checkbox shown in Solidity Static Analysis plugin with the label:

Note: When you connect to localhost, remixd will create an empty workspace. Use it to add the sol files. Also

References: